TL;DR:

- Prediction identifies at-risk employees but does not explain the underlying reasons.

- Combining predictive analytics with qualitative insights and explainable AI improves retention strategies.

- Effective retention requires personalized interventions based on understanding each employee’s unique context.

There’s a seductive comfort in prediction. You pull the data, run the model, and a dashboard tells you that three people on your team are “high flight risks.” You feel like you’re ahead of the problem. But here’s what that number doesn’t tell you: one of those three is burned out and needs relief from an impossible workload, another is quietly job hunting because they feel invisible to leadership, and the third is actually fine but goes through a low-engagement cycle every Q4. Same risk score. Three completely different problems. Three completely different solutions.

Table of Contents

- Prediction vs. understanding: Why the difference matters

- How predictive retention models work—and their limits

- Unlocking understanding: Qualitative methods, XAI, and contextual insights

- From confusion to clarity: Actionable retention frameworks for leaders

- Our perspective: Where executives get retention wrong—and what actually works

- How OpenElevator empowers next-level retention strategies

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Prediction vs. understanding | Predicting who may leave and understanding why are different, and executives must use both for effective retention. |
| Limits of black-box models | Opaque predictive algorithms can signal risk but rarely reveal actionable causes, leading to poor HR decisions. |
| Qualitative insights matter | Combining explainable AI with interviews uncovers root issues and enables responses that move the needle. |
| Frameworks drive action | Practical strategies integrate prediction, causal understanding, and personalized interventions for better outcomes. |
| Personalization is essential | Generic interventions fail; tailored actions based on context and history deliver results. |

Prediction vs. understanding: Why the difference matters

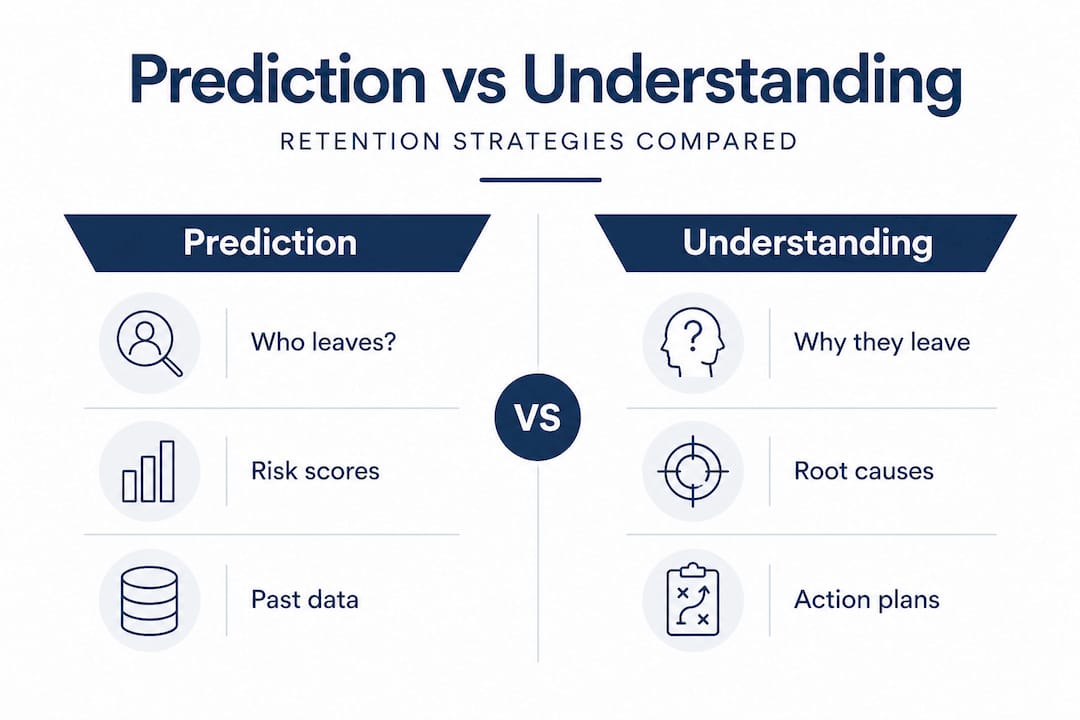

Let’s get precise about language, because sloppy language leads to sloppy strategy. Prediction and understanding are not the same thing, and treating them as interchangeable is one of the most expensive mistakes an executive can make in HR.

Prediction is the act of using historical and behavioral data to identify which employees are statistically likely to leave. It answers the question: who? It’s powerful as a triage tool, a way to direct your attention before someone hands in their notice.

Understanding is what happens after that. It investigates the why: the root causes, contextual factors, and specific motivations driving a person toward the exit. Churn research draws the same line: prediction identifies who is likely to leave but not why, while understanding surfaces the root causes that make an intervention actionable.

Confusing these two is like using a smoke detector to diagnose a fire. The alarm tells you something is wrong. It doesn’t tell you whether the kitchen is burning, the wiring is faulty, or someone left a candle unattended. If you grab the wrong extinguisher, you make it worse.

Here’s a quick way to see the contrast:

| Dimension | Prediction | Understanding |
|---|---|---|
| Core question | Who is at risk? | Why are they at risk? |
| Data type | Quantitative, historical | Qualitative, contextual |
| Output | Risk score or flag | Root cause and action |
| Best used for | Prioritizing attention | Designing interventions |
| Limitation | No causal clarity | Harder to scale quickly |

Executives who rely only on prediction end up chasing flags without clarity. They might offer a blanket pay raise to every flagged employee, only to discover that money wasn’t the issue for half of them. Or they schedule one-on-ones that feel performative because the manager doesn’t actually know what to say.

The key insight? You need both. Evidence-based retention frameworks consistently show that organizations that pair predictive analytics with genuine qualitative inquiry outperform those that rely on data alone. This is the foundation on which smart retention strategy is built.

How predictive retention models work—and their limits

Predictive models in HR work by training machine learning algorithms on historical employee data: tenure, performance reviews, engagement survey scores, absenteeism, promotion history, salary relative to market, and more. The model learns patterns from employees who previously left and uses those patterns to score current employees on their likelihood of departure.

On paper, that sounds like exactly what you’d want. In practice, it’s more complicated.

The accuracy benchmarks are genuinely impressive. Most models achieve 80 to 96% accuracy, though precision and recall often land between 60 and 90%, which matters a great deal when you’re designing expensive interventions. Think about it this way: if your model flags 20 people as high risk and only 14 of them were actually going to leave, you’ve wasted resources on six people who weren’t going anywhere. And if it missed four people who did leave, that’s a real cost with no warning.
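
To make that arithmetic concrete, here is a minimal sketch that turns the example above into precision and recall. The counts of 20 flagged, 14 correct, and 4 missed come from the paragraph; the headcount of 200 is purely an assumption added for illustration, so that overall accuracy can be computed alongside them.

```python
# Counts taken from the example above.
flagged = 20            # employees the model marked as high risk
correctly_flagged = 14  # flagged employees who really were going to leave
missed_leavers = 4      # employees who left without being flagged

# Assumption for illustration only: the source does not give a headcount.
headcount = 200

true_positives = correctly_flagged
false_positives = flagged - correctly_flagged
false_negatives = missed_leavers
true_negatives = headcount - true_positives - false_positives - false_negatives

# Precision: of the people we flagged, how many were real risks?
precision = true_positives / (true_positives + false_positives)
# Recall: of the people who actually left, how many did we catch?
recall = true_positives / (true_positives + false_negatives)
# Accuracy: share of all employees classified correctly.
accuracy = (true_positives + true_negatives) / headcount

print(f"precision={precision:.2f} recall={recall:.2f} accuracy={accuracy:.2f}")
```

Under that assumed headcount, accuracy comes out at 95% while precision is only 70%. That is why a headline accuracy figure can look reassuring even when nearly a third of your interventions land on the wrong people.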

But the deeper problem isn’t accuracy. It’s opacity. Black-box machine learning models predict accurately but lack transparency into the factors driving those predictions, which fundamentally limits what HR leaders can actually do with the output.

Think about the signal you get: “Employee X has a 78% probability of leaving in the next 90 days.” What do you do with that? The model knows. But it’s not telling you. It’s a locked room with a blinking red light outside.

Here’s where the real danger lives:

- Misinformed interventions. Leaders respond with generic retention moves (a pay bump, a new title, a one-on-one) without knowing whether any of those things address the actual problem.

- Over-reliance on scores. Once a number exists, people treat it as truth. The nuance of a person gets flattened into a percentage.

- Ethical blind spots. Acting on a prediction can feel intrusive to employees who haven’t expressed any concern, and mishandled outreach can accelerate the departure you were trying to prevent.

Early action based on predictions does deliver better ROI than scrambling after the resignation letter arrives, and global mobility research shows that proactive talent retention strategies consistently outperform reactive ones. The key is treating the prediction as a starting point, not an endpoint.

Pro Tip: Never treat a risk score as a conclusion. Treat it as a question. The number tells you where to look. Your job is to figure out what you’re looking at once you get there.

Unlocking understanding: Qualitative methods, XAI, and contextual insights

So if prediction opens the door, understanding is what you do when you walk through it. This is where things get genuinely interesting, and where most organizations are still playing catch-up.

Predictive models alone are insufficient. Surfacing causal mechanisms takes qualitative methods, like structured interviews and contextual conversations, that no model provides. The data shows a pattern. The conversation reveals the story behind it.

There are three primary tools for building real understanding:

- Qualitative interviews and stay conversations. These are structured discussions designed to uncover what an employee values, what frustrates them, and what would make them stay. They’re not performance reviews in disguise. Done well, they feel like genuine listening, and they surface information no algorithm can infer.

- Explainable AI (XAI) tools, particularly SHAP. SHAP (SHapley Additive exPlanations) is a method that breaks open the black box. Instead of just giving you a risk score, XAI tools like SHAP attribute predictions to specific features, showing which factors contributed most to a particular employee’s score. Is it their recent performance dip? Their tenure hitting a two-year inflection point? Their shift from full-time to hybrid? This transforms the prediction from a number into a narrative.

- Contextual judgment. This is the human layer that neither qualitative data nor XAI can fully replace. A manager who knows that an employee just moved cities, is dealing with a difficult team dynamic, or recently lost out on a promotion brings essential context that no model contains.
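
To show what “attributing a prediction to specific features” means in practice, here is a hand-rolled sketch of the Shapley idea that SHAP builds on. It is not the SHAP library and not any vendor’s model: the `risk_score` function, its coefficients, the feature names, and both employee profiles are invented for illustration. It computes exact Shapley values by averaging each feature’s marginal effect over every order in which features could be switched from a baseline employee to the flagged one.

```python
from itertools import permutations

# Hypothetical "typical employee" and one flagged employee.
BASELINE = {"workload_hours": 40, "months_since_promotion": 12, "engagement": 4.0}
EMPLOYEE = {"workload_hours": 55, "months_since_promotion": 30, "engagement": 3.5}

def risk_score(f):
    """Toy risk model; every coefficient here is made up."""
    overload = max(0, f["workload_hours"] - 40)
    disengagement = max(0.0, 4.0 - f["engagement"])
    score = 0.10
    score += 0.01 * overload
    score += 0.005 * (f["months_since_promotion"] - 12)
    score += 0.10 * disengagement
    score += 0.002 * overload * disengagement  # overload hurts more when disengaged
    return score

def shapley_values(model, baseline, instance):
    """Exact Shapley values: average marginal effect over all feature orderings."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        previous = model(current)
        for name in order:
            current[name] = instance[name]  # switch this feature to the employee's value
            updated = model(current)
            totals[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in totals.items()}

contributions = shapley_values(risk_score, BASELINE, EMPLOYEE)
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {value:+.4f}")
```

The contributions add up to the gap between this employee’s score and the baseline score, which is what lets you read the output as “how much of the risk is workload, how much is the stalled promotion.” Enumerating every ordering only works for a handful of features; real tools approximate this, and the attribution still describes the model’s reasoning, not the employee’s.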

Here’s an edge case worth sitting with: imagine two employees with identical risk scores. Both have been quieter lately in meetings, both had slightly lower performance ratings last quarter, and both flagged neutral on their last engagement survey. But one just had a baby and is exhausted, navigating a transition they haven’t spoken up about. The other is actively interviewing and has been approached by a competitor. Same signal. Completely different interventions required.

“Feature importance shows relevance but not direction,” as one key study notes, meaning a model can tell you that ‘workload’ is a factor without telling you whether more or less of it would help. Without context, you could easily prescribe the wrong thing.

This is where customized HR responses grounded in people science, rather than generic wellness programs or across-the-board raises, create a measurable difference. The organizations getting this right are the ones pairing their dashboards with discipline: regular qualitative touchpoints, explainable model outputs, and managers trained to interpret both.

Pro Tip: Don’t run XAI and qualitative interviews as separate tracks. Feed what the interviews reveal back into how you interpret XAI output. They sharpen each other. Used in tandem, they create a picture that neither produces alone.

From confusion to clarity: Actionable retention frameworks for leaders

Frameworks only work when they’re honest about what they’re solving. Here’s the frame I’d suggest for any executive looking to move from reactive retention to genuine retention strategy.

The three-step loop:

- Identify the risk. Use your predictive model to flag employees crossing a risk threshold. Set a clear threshold based on your model’s precision, not just accuracy, so you’re working with a manageable and meaningful list.

- Uncover the causes. For every flagged employee, initiate a qualitative touchpoint through a stay interview, a meaningful one-on-one with their manager, or a structured conversation with HR. Use XAI output to give that conversation direction. You’re not going in blind; you’re going in with hypotheses to test.

- Customize the intervention. Based on what you learn, design a response that actually fits. A workload reduction. A development conversation. A recognition initiative. A team restructure. The intervention must match the person, not just the score.
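
Step one of the loop, setting a threshold from precision rather than accuracy, can be sketched roughly as follows. The scores, the outcome labels, and the 75% precision target are all hypothetical; in practice you would use held-out historical data where you already know who left.

```python
# Hypothetical validation data: model risk scores and whether each person left (1) or stayed (0).
scores = [0.92, 0.85, 0.81, 0.74, 0.66, 0.58, 0.45, 0.33, 0.21, 0.10]
left = [1, 1, 0, 1, 1, 0, 0, 1, 0, 0]

def precision_at(threshold, scores, left):
    """Share of employees scoring at or above the threshold who actually left."""
    flagged = [outcome for score, outcome in zip(scores, left) if score >= threshold]
    return sum(flagged) / len(flagged) if flagged else None

def lowest_threshold_meeting(target_precision, scores, left):
    """Lowest cutoff (largest flagged list) that still meets the precision target."""
    for threshold in sorted(set(scores)):
        precision = precision_at(threshold, scores, left)
        if precision is not None and precision >= target_precision:
            return threshold, precision
    return None

result = lowest_threshold_meeting(0.75, scores, left)
print(result)
```

Picking the lowest cutoff that meets the target keeps the flagged list as large as it can be without diluting it. That matters because every name on the list triggers a qualitative conversation in step two.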

Beyond the loop, there are critical pitfalls to avoid:

- Avoid one-size-fits-all responses. Offering every flagged employee a pay review is expensive, sometimes counterproductive, and signals to people that you’re following a script rather than genuinely listening.

- Avoid over-indexing on the prediction model alone. Use it to prioritize your attention, not to define your response.

- Build feedback cycles. After each intervention, track the outcome. Did the person stay? Did engagement improve? This data makes your next prediction model more accurate and your future interventions sharper.
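
The feedback cycle in the last point can start as something very simple. Here is a minimal sketch, assuming a hypothetical log that records the type of intervention and whether the person was still employed at follow-up; the intervention names and outcomes are invented.

```python
from collections import defaultdict

# Hypothetical log: (intervention type, still employed at follow-up).
intervention_log = [
    ("workload_reduction", True),
    ("workload_reduction", True),
    ("workload_reduction", False),
    ("pay_review", True),
    ("pay_review", False),
    ("development_plan", True),
    ("development_plan", True),
]

def retention_by_intervention(log):
    """Return the share of employees retained for each intervention type."""
    counts = defaultdict(lambda: [0, 0])  # [stayed, total]
    for kind, stayed in log:
        counts[kind][0] += int(stayed)
        counts[kind][1] += 1
    return {kind: stayed / total for kind, (stayed, total) in counts.items()}

rates = retention_by_intervention(intervention_log)
for kind, rate in sorted(rates.items()):
    print(f"{kind}: {rate:.0%} retained")
```

With samples this small the rates are a prompt for discussion, not proof that one intervention works better than another. The point is that outcomes are recorded at all, so they can inform the next round.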

The same risk signal can require two completely different responses, based on the employee’s individual history, their relationship with their manager, and the broader team context. A quiet employee who was recently passed over for promotion needs a very different conversation than a quiet employee who just returned from parental leave.

Stay interviews, specifically, are underused and undervalued. Unlike exit interviews, which are essentially post-mortems, stay interviews happen while there’s still something to preserve. They ask employees directly: What keeps you here? What might pull you away? What would make your experience significantly better? The answers, when taken seriously, are often startlingly actionable.

Strategic talent retention at a portfolio level requires treating these frameworks as living systems, not one-off projects. Workforce dynamics shift. What motivated your team two years ago may be entirely different today. Revisit your frameworks quarterly. Treat retention strategy with the same rigor you apply to revenue strategy.

Pro Tip: Assign ownership. Retention frameworks fail when they exist in PowerPoint decks but not in anyone’s KPIs. Someone on your leadership team needs to own the loop: prediction review, qualitative cadence, intervention tracking.

Our perspective: Where executives get retention wrong—and what actually works

Here’s a candid truth I want to share, because I’ve seen this pattern repeat across organizations of all shapes and sizes. Executives fall in love with prediction models because they feel like control. There’s a dashboard, there’s a score, there’s a number to manage. It scratches the itch for certainty in what is fundamentally a human and therefore uncertain domain.

But the business impact of turnover doesn’t come from poor prediction. It comes from poor understanding. You can know, with 90% accuracy, that someone is likely to leave in 60 days, and still lose them because you didn’t understand why and responded with something entirely beside the point.

The most impactful retention moments I’ve seen come from listening. Not from algorithms, though they have a critical role. From a manager who actually heard an employee say “I don’t feel like I’m growing here” and responded with a real development plan rather than a hollow reassurance. The algorithm pointed the flashlight. The conversation was where the real work happened.

What actually works is the combination: use your retention solutions to build early visibility, use explainable AI to give that visibility meaning, and then back it up with genuine human inquiry. That’s not a soft approach. It’s actually the hardest one to execute, because it requires discipline, training, and consistent follow-through.

The leaders who get this right stop asking “who is going to leave?” as their terminal question. They start asking “what do our people actually need, and are we delivering it?” The prediction model helps them know where to ask. The understanding tools help them know what they’re hearing when someone answers.

How OpenElevator empowers next-level retention strategies

If reading this has surfaced a gap between where your retention strategy is today and where it needs to be, that’s exactly the tension worth sitting with.

OpenElevator was built precisely for this moment in your thinking. It adds the visibility layer that HR systems and engagement tools were never designed to provide: clear, quantifiable insight into who is at risk, why the risk is building, and what interventions are most likely to work given your specific team context. It doesn’t replace the qualitative judgment your leaders bring. It gives that judgment something real to work with: early warning signals, actionable recommendations, and predictive hiring fit, so you’re solving for retention before it becomes a crisis, not after.

Frequently asked questions

Can predictive models alone reduce employee turnover?

No. Predictive models can identify who is at risk, but qualitative methods and explainable insights are what enable effective, targeted interventions that actually address the root cause.

What is SHAP and why does it matter for retention strategies?

SHAP is an explainable AI method that shows which factors drive an individual employee’s risk score. XAI tools like SHAP make predictions actionable by attributing them to specific, interpretable features rather than leaving leaders with an opaque number.

How accurate are employee retention prediction models?

Most models achieve 80 to 96% overall accuracy, but precision and recall frequently fall between 60 and 90%, which means intervention planning based on model output alone carries a meaningful risk of mismatch.

Why are personalized interventions important for retention?

Because the same risk signal can stem from completely different causes in different employees. A generic response ignores this, and worse, it can signal to people that leadership is following a process rather than actually caring about them as individuals.

What’s the optimal way for executives to combine prediction and understanding?

Use prediction to prioritize your attention and flag who needs focus, then layer in explainable AI output and structured stay interviews to design interventions that fit the actual person, not just the score.